Last month John Shehata from NewzDash published a blog post documenting a study covering the impact of syndication on news publishers. For example, when a publisher syndicates articles to syndication partners, which site ranks, and what does that look like across Google surfaces (Search, Google News, etc.)?

The results confirmed what many have seen in the SERPs over time while working at, or helping, news publishers. Google can often rank the syndication partner versus the original source, even when the syndicated content on partner sites is correctly canonicalized to the original source.

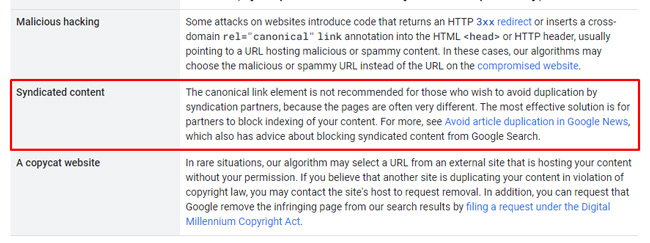

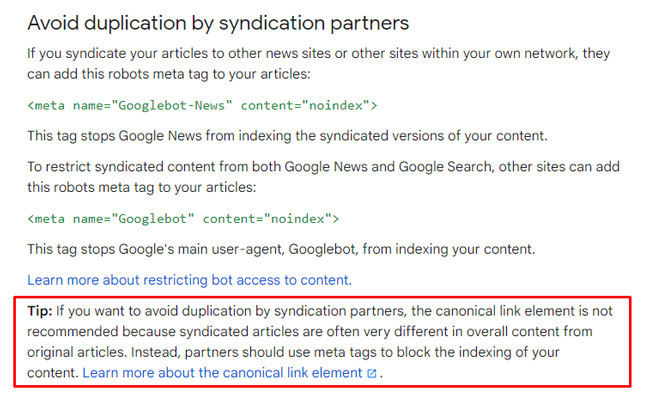

And as a reminder, Google updated its documentation about canonicalization in May of 2023 and revised its recommendation for syndicated content. Google now fully recommends that syndication partners noindex news publisher content if the publisher doesn’t want to compete with that syndication partner in Search. Google explained that rel canonical isn’t sufficient since the original page and the page located on the syndication partner website can often be different (when you take the entire page into account, including the boilerplate, other supplementary content, etc.). Therefore, Google’s systems can have a hard time determining that it’s the same article being syndicated and then rank the wrong version, or even both. More on that situation soon when I cover the case study…
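To make the two signals concrete, here is a minimal sketch of how you might check a syndicated copy for them. It only looks at the HTML (a robots meta tag and a rel canonical link), so it will miss a noindex delivered through the X-Robots-Tag HTTP header. The class name, the function name, and the example.com-style urls are all made up for illustration.

```python
from html.parser import HTMLParser


class IndexingSignalParser(HTMLParser):
    """Collects robots meta directives and the rel=canonical target from a page."""

    def __init__(self):
        super().__init__()
        self.robots_directives = set()
        self.canonical = None

    def handle_starttag(self, tag, attrs):
        attrs = {name: (value or "") for name, value in attrs}
        if tag == "meta" and attrs.get("name", "").lower() in ("robots", "googlebot"):
            # content looks like "noindex, follow"; split it into directives
            for directive in attrs.get("content", "").split(","):
                self.robots_directives.add(directive.strip().lower())
        elif tag == "link" and "canonical" in attrs.get("rel", "").lower().split():
            self.canonical = attrs.get("href") or None


def syndication_signals(html, original_url):
    """Report whether a partner page is noindexed and/or canonicalized to the original."""
    parser = IndexingSignalParser()
    parser.feed(html)
    return {
        # "none" is shorthand for "noindex, nofollow"
        "noindex": bool({"noindex", "none"} & parser.robots_directives),
        "canonical_points_to_original": parser.canonical == original_url,
    }


# Hypothetical partner page that follows both the old and the new advice
partner_html = (
    '<html><head>'
    '<meta name="robots" content="noindex, follow">'
    '<link rel="canonical" href="https://publisher.example/story">'
    '</head><body>Syndicated article</body></html>'
)
signals = syndication_signals(partner_html, "https://publisher.example/story")
```

A partner page that only returns `canonical_points_to_original` as true, with `noindex` false, is the setup Google now says can still end up ranking.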

And here is information from Google’s documentation for news publishers about avoiding duplication problems in Google News with syndicated content:

Previously, Google said you could use rel canonical pointing to the original source, while also providing a link back to the original source, which should have helped its systems determine the canonical url (and original source). And to be fair to Google, they did also explain in the past that you could noindex the content to avoid problems. But as anyone working with news publishers understands, asking syndication partners to noindex that content is a tough thing to get approved. I won’t bog down this post by covering that topic, but most syndication partners actually want to rank for the content (so they are unlikely to noindex the syndicated content they are consuming).

Your conversations with them might look like this:

The Case Study: A clear example of news publisher syndication problems.

OK, so we know Google recommends noindexing content on the syndication partner website and avoiding rel canonical as a solution. But what does all of this actually look like in the SERPs? How bad is the situation when the content isn’t noindexed? And does it impact all Google surfaces like Search, Top Stories, the News tab in Search, Google News, and Discover?

Well, I decided to dig in for a client that heavily syndicates content to partner websites. They have for a long time, but never really understood the true impact. After I sent along the study from NewzDash, we had a call with several people from across the organization. It was clear everyone wanted to know how much visibility they were losing by syndicating content, where they were losing that visibility, if that’s also impacting indexing of content, and more. So as a first step, I decided to craft a system to start capturing data that could help identify potential syndication problems. I’ll cover that next.

The Test: Checking 3K recently published urls that are also being syndicated to partners.

I took a step back and began mapping out a system for tracking the syndication situation the best I could based on Google’s APIs (including the Search Console API and the URL Inspection API). My goal was to understand how Google was handling the latest three thousand urls published from a visibility standpoint, indexing standpoint, and performance standpoint across Google surfaces (Search, Top Stories, the News tab in Search, and Discover).

Here is the system I mapped out:

- Export the latest three thousand urls based on the Google News sitemap.

- Run the urls through the URL Inspection API to check indexing in bulk (to identify any type of indexing issue, like Google choosing the syndication partner as the canonical versus the original source). If the pages weren’t indexed, then they clearly wouldn’t rank…

- Then check performance data for each URL in bulk via the Search Console API. That included data for Search, the News tab in Search, Google News, and Discover.

- Based on that data, identify indexed urls with no performance data (or very little) as candidates for syndication problems. If the urls had no impressions or clicks, then maybe a syndication partner was ranking versus my client.

- Spot-check the SERPs to see how Google was handling the urls from a ranking perspective across surfaces.
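The first three steps can be sketched roughly as follows. This is not the exact tooling used for the case study, just a minimal outline that builds the request bodies and reads the responses without making any network calls. The field names (`inspectionUrl`, `siteUrl`, `googleCanonical`, `userCanonical`, and the `type` values) follow the Search Console API as I understand its documentation, so verify them against the current reference before relying on them. The helper names and sample urls are hypothetical, and the sitemap is assumed to list the newest urls first.

```python
import xml.etree.ElementTree as ET

SITEMAP_NS = {"sm": "http://www.sitemaps.org/schemas/sitemap/0.9"}

# Surface label -> Search Analytics "type" value (as I understand the API docs)
SURFACE_TYPES = {
    "Search": "web",
    "News tab": "news",
    "Google News": "googleNews",
    "Discover": "discover",
}


def urls_from_sitemap(sitemap_xml, limit=3000):
    """Return up to `limit` <loc> values from a sitemap, in document order."""
    root = ET.fromstring(sitemap_xml)
    locs = [loc.text.strip() for loc in root.findall("sm:url/sm:loc", SITEMAP_NS) if loc.text]
    return locs[:limit]


def inspection_request(url, property_url):
    """Request body for one URL Inspection API call."""
    return {"inspectionUrl": url, "siteUrl": property_url}


def canonical_problem(inspection_response):
    """True when Google's selected canonical differs from the one the page declares."""
    status = inspection_response.get("inspectionResult", {}).get("indexStatusResult", {})
    google = status.get("googleCanonical")
    declared = status.get("userCanonical")
    return bool(google and declared and google != declared)


def performance_request(url, start_date, end_date, surface):
    """Request body for one Search Analytics query, filtered to a single page."""
    return {
        "startDate": start_date,
        "endDate": end_date,
        "dimensions": ["page"],
        "type": SURFACE_TYPES[surface],
        "dimensionFilterGroups": [
            {"filters": [{"dimension": "page", "operator": "equals", "expression": url}]}
        ],
    }


# Hypothetical two-url sitemap to show the flow
sample_sitemap = (
    '<urlset xmlns="http://www.sitemaps.org/schemas/sitemap/0.9">'
    "<url><loc>https://publisher.example/a</loc></url>"
    "<url><loc>https://publisher.example/b</loc></url>"
    "</urlset>"
)
latest = urls_from_sitemap(sample_sitemap, limit=3000)
bodies = [inspection_request(u, "https://publisher.example/") for u in latest]
```

Keeping the request builders separate from the code that actually sends them makes it easier to batch and throttle the calls, since both APIs enforce usage quotas.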

No Rhyme or Reason: What I found disturbed me even more than I thought it would.

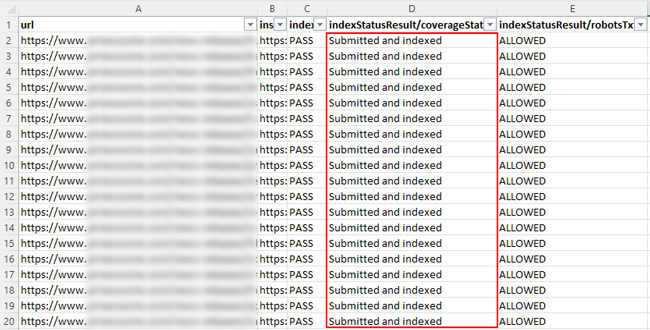

First was the indexing check across three thousand urls, which went very well. Almost all of the urls were indexed by Google. And there were no examples of Google incorrectly choosing syndication partners as the canonical. That was great and surprised me a bit. I thought I would see that for at least some of the urls.

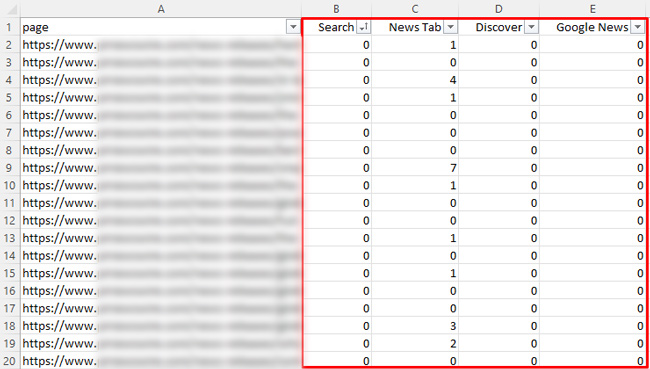

Next, I exported performance data in bulk for the latest three thousand urls. Once exported, I was able to isolate urls with very little, or no, performance data across surfaces. These were great candidates for potential syndication problems, i.e., if the content yielded no impressions or clicks, then maybe a syndication partner was ranking versus my client.
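Isolating those urls is a simple filter once the data is exported. Here is a minimal sketch, assuming the rows for each surface look like Search Analytics rows (a `keys` list with the page url first, plus `impressions`). The function names, the threshold parameter, and the sample data are hypothetical.

```python
from collections import defaultdict


def impressions_by_url(rows):
    """Sum impressions per page url for one surface's exported rows."""
    totals = defaultdict(int)
    for row in rows:
        totals[row["keys"][0]] += row.get("impressions", 0)
    return totals


def flag_candidates(indexed_urls, rows_by_surface, max_impressions=0):
    """Return {url: per-surface impressions} for indexed urls at or below the threshold."""
    per_surface = {surface: impressions_by_url(rows) for surface, rows in rows_by_surface.items()}
    flagged = {}
    for url in indexed_urls:
        breakdown = {surface: per_surface[surface].get(url, 0) for surface in per_surface}
        if sum(breakdown.values()) <= max_impressions:
            flagged[url] = breakdown
    return flagged


# Hypothetical export: one url performs in Search, the other has no data anywhere
rows_by_surface = {
    "web": [{"keys": ["https://publisher.example/a"], "clicks": 5, "impressions": 120}],
    "discover": [],
}
candidates = flag_candidates(
    ["https://publisher.example/a", "https://publisher.example/b"],
    rows_by_surface,
)
```

Raising `max_impressions` above zero widens the net to urls with very little data, and the per-surface breakdown shows where to start spot-checking.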

And then I started spot-checking the SERPs. After checking a number of queries based on the list of urls that were flagged, there was no rhyme or reason why Google was surfacing my client’s urls versus the syndication partners (or vice versa). And to complicate things even more, sometimes both urls ranked in Top Stories, Search, etc. And then there were times one ranked in Top Stories while the other ranked in Search. And the same went for the News tab in Search and Google News. It was a mess…

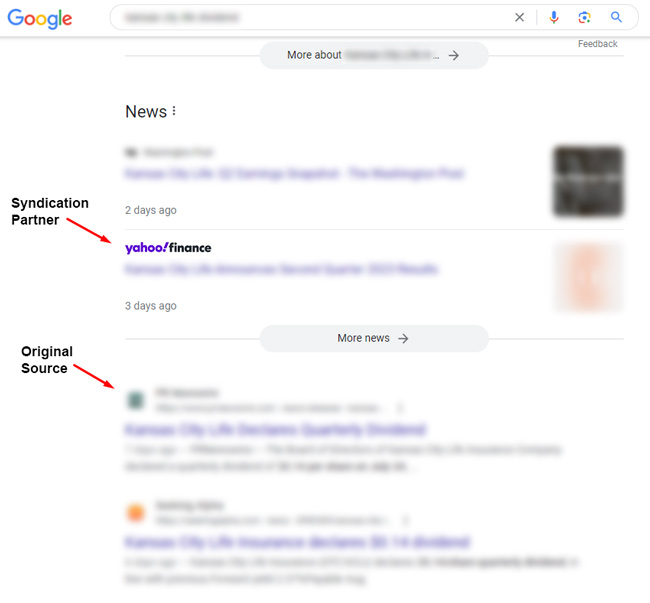

I’ll provide a quick example below so you can see the syndication mess. Note, I had to blur the SERPs heavily in the following screenshots, but I wanted to provide an example of what I found. Again, there was no rhyme or reason why this was happening. Based on this example, and what I saw across other examples I checked, I can understand why Google is saying to noindex the urls downstream on syndication partners. If not, any of this could happen.

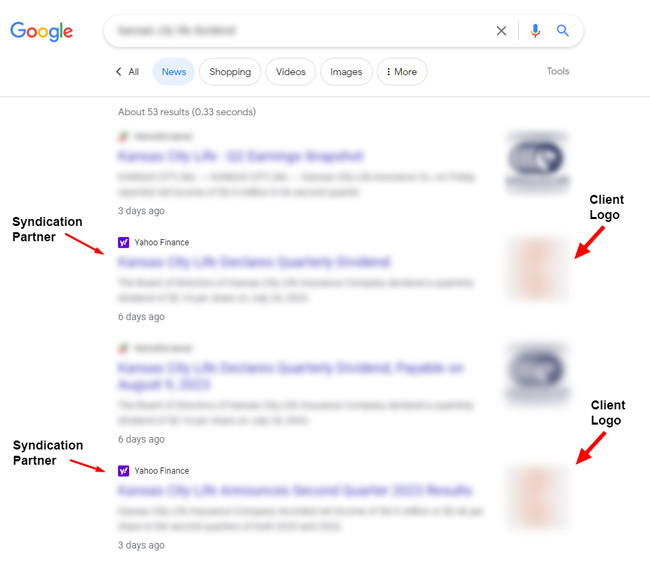

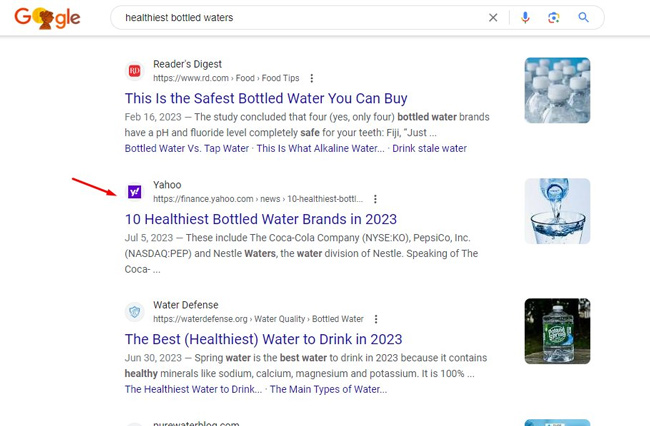

First, here is an example of Yahoo Finance ranking in Top Stories while the original ranks in Search right below it:

Next, Yahoo News ranks twice in the News tab in Search (which is an important surface for my client), while the original source is nowhere to be found. And my client’s logo is shown for the syndicated content. How nice…

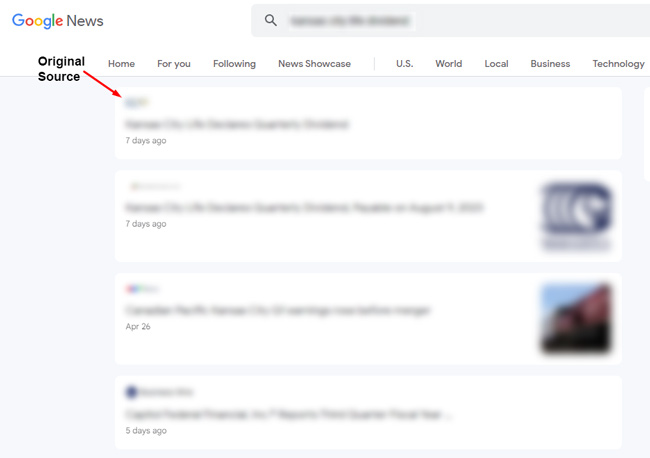

And then in Google News, the original source ranks and syndication partners are nowhere to be found:

As you can see, the situation is a mess… and good luck trying to track this on a regular basis. And the lost visibility across thousands of pages per month could really add up… It’s hard to determine the exact number of lost impressions and clicks, but it can be huge for large news publishers.

Discover: The Personalized Black Hole

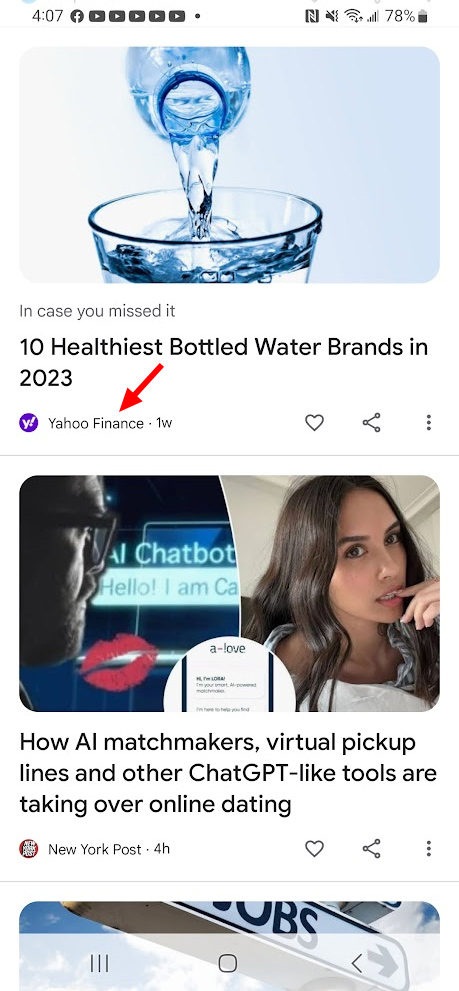

And regarding Discover, it’s tough to track lost visibility there since the feed is personalized and you can’t possibly see what every other person is seeing in their own feed. But you might find examples in the wild of syndication partners ranking there versus your own content. Below is an example I found recently of Yahoo Finance ranking in Discover for an Insider Monkey article. Note, Insider Monkey is not a client and not the site I’m covering in the case study, but it’s a good example of what can happen in Discover. And if this is happening a lot, the site could be losing a ton of traffic…

Here is Yahoo Finance ranking in Discover:

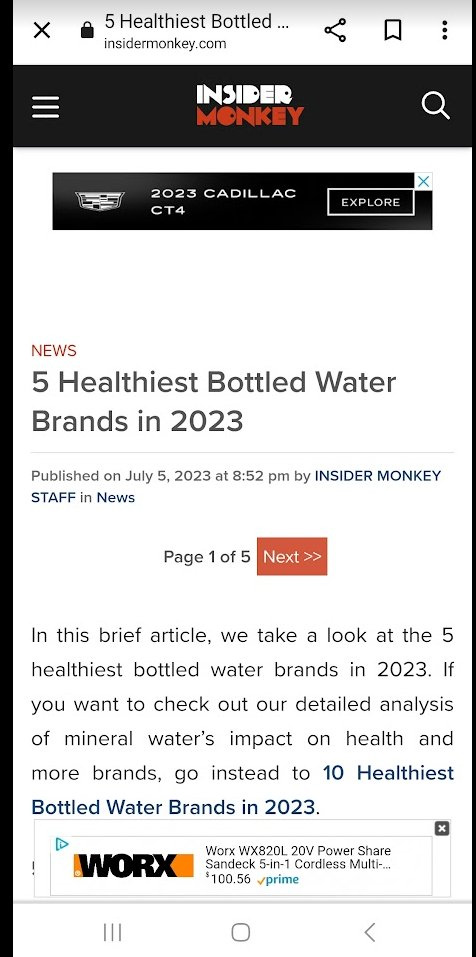

And here is the original article on Insider Monkey (but it’s in a slideshow format). This example shows how Google can see the pages are different, which can cause problems understanding that they are the same article:

And here is Yahoo Finance ranking #2 for the target keyword in the core SERPs. So the syndication partner is ranking above the original in the search results:

Key points and recommendations for news publishers dealing with syndication problems:

- First, try to understand indexing and visibility problems the best you can. Use an approach like I mapped out to at least get a feel for how bad the problem is. Google’s APIs are your friends here and you can bulk process many urls in a short period of time.

- Weigh the risks and benefits of syndicating content to partners. Is the additional visibility across partners worth losing visibility in Search, Top Stories, the News tab in Search, Google News, and Discover? Remember, this could also mean a loss of powerful links as well… For example, if the syndication partner ranks, and other sites link to those articles, you are losing those links.

- If needed, talk with syndication partners about potentially noindexing the syndicated content. This will probably NOT go well… Again, they often want to rank to get that traffic. But you never know… some might be fine with noindexing the urls.

- Understand Discover is tough to track, so you might be losing more traffic there than you think (and maybe a lot). You might catch some syndication problems there in the wild, but you cannot simply go there and find syndication issues easily (like you can with Search, Top Stories, the News tab, and Google News).

- Tools like Semrush and NewzDash can help fill the gaps from a rank tracking perspective. And NewzDash focuses on news publishers, so that could be a valuable tool in your tracking arsenal. Semrush could help with Search and Top Stories. Again, try to get a solid feel for visibility problems due to syndicating content.

Summary – Syndication problems for news publishers might be worse than you think.

If you are syndicating content, then I recommend trying to get an understanding of what’s going on in the SERPs (and across Google surfaces). And then form a plan of attack for dealing with the situation. That might include keeping things as-is, or it might drive changes to your syndication strategy. But the first step is gaining some visibility of the situation (pun intended). Good luck.

GG
